Task-oriented Visual Pose Estimation

Vision-based, ML-driven 6D object pose estimation for manipulation tasks

As a contribution to the Horizon Europe project euROBIN, I developed a reusable, modular object pose estimation software component, which is publicly available in the tum-tb-perception GitHub repository. The work was presented at the European Robotics Forum 2025 (Abdelrahman et al., 2025) and was nominated for a best paper award. The component was also used in a RoboCup-style cooperative euROBIN competition, both by our team (TUM-MIRMI) and by other competitors, in particular for a set of robot manipulation challenges on a “Taskboard” benchmark platform.

Implementation

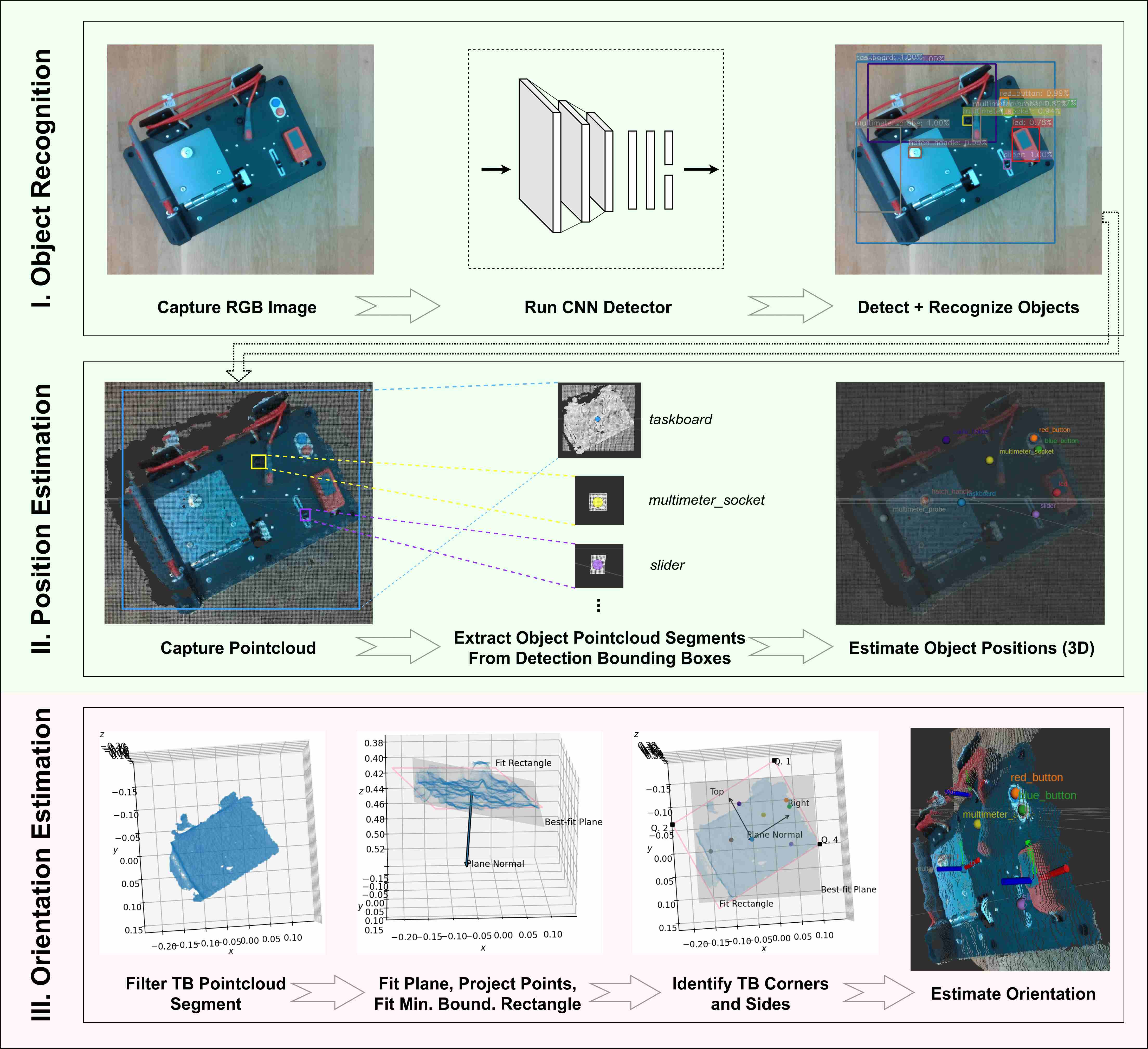

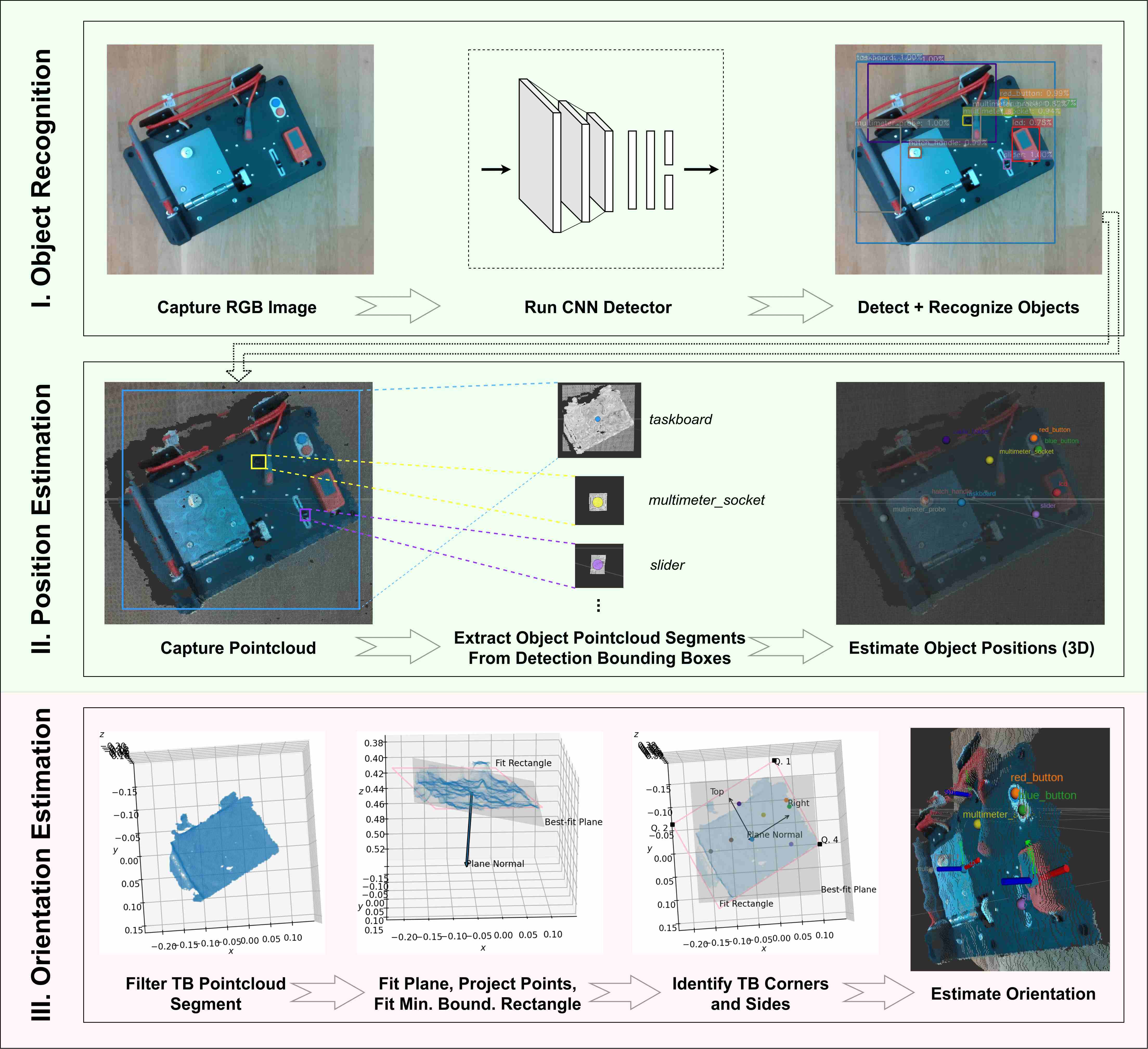

The pose estimator relies on data from an RGB-D sensor, such as an Intel RealSense camera, to determine the 6D pose of known objects. It involves three stages:

- Object Recognition

- Position Estimation

- Orientation Estimation

For initial object recognition, a conventional CNN model is trained to detect known objects in RGB images. In this case, we used a pre-trained Faster R-CNN model and fine-tuned it using transfer learning on a relatively small training dataset of images showing the objects of interest under varying conditions.

For position estimation, the resulting image detections and the aligned depth data (point clouds) are used to localize the detected objects in 3D space. We project all 3D depth points onto the 2D RGB image plane using central projection. Then, for each detected object, we find all projected 2D points that fall within its bounding box. The 3D points from which they were projected form the segment of the original point cloud that lies on the respective object. Taking the mean of each object’s point cloud segment yields an estimate of its 3D position.
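The position estimation step can be illustrated with a minimal sketch. It is not the code from the tum-tb-perception repository: the names `Intrinsics`, `BoundingBox`, `project_point`, and `estimate_position` are hypothetical, the camera is assumed to follow an ideal pinhole model without lens distortion, and the points are assumed to be expressed in the RGB camera frame. Plain Python is used for readability; a practical implementation would likely vectorize the loop.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

Point3D = Tuple[float, float, float]


@dataclass
class Intrinsics:
    """Pinhole camera parameters in pixels (hypothetical container)."""
    fx: float
    fy: float
    cx: float
    cy: float


@dataclass
class BoundingBox:
    """Axis-aligned detection box in pixel coordinates (hypothetical container)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def project_point(point: Point3D, k: Intrinsics) -> Optional[Tuple[float, float]]:
    """Central (pinhole) projection of a 3D point onto the image plane."""
    x, y, z = point
    if z <= 0.0:
        return None  # points at or behind the camera cannot be projected
    return (k.fx * x / z + k.cx, k.fy * y / z + k.cy)


def estimate_position(points: Iterable[Point3D], box: BoundingBox,
                      k: Intrinsics) -> Optional[Point3D]:
    """Mean of all 3D points whose projection falls inside the box."""
    segment = []
    for point in points:
        pixel = project_point(point, k)
        if pixel is None:
            continue
        u, v = pixel
        if box.x_min <= u <= box.x_max and box.y_min <= v <= box.y_max:
            segment.append(point)  # keep the original 3D point
    if not segment:
        return None  # no depth data inside this detection
    n = len(segment)
    xs, ys, zs = zip(*segment)
    return (sum(xs) / n, sum(ys) / n, sum(zs) / n)


# Example with made-up values: two points fall inside the box, two do not.
camera = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
cloud = [(0.0, 0.0, 1.0), (0.1, 0.0, 1.0), (1.0, 1.0, 1.0), (0.0, 0.0, -1.0)]
position = estimate_position(cloud, BoundingBox(300, 220, 380, 260), camera)
```

Because the mean is taken over every point inside the box, background points that happen to project into the box pull the estimate away from the object. A median or a depth-based outlier filter would be a possible refinement.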

For orientation estimation, we currently implement object-specific, heuristic point cloud processing operations. In the case of the taskboard platform that we considered (depicted in the figures above), we use a priori knowledge of the board’s geometry and the estimated component positions to determine its orientation. In future work, we will target a more object-agnostic orientation estimation method.
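To give a rough idea of what a geometry-based heuristic of this kind could look like, the sketch below derives a single yaw angle from the estimated positions of two board components and their known locations in the board's own frame. This is an illustrative assumption, not the method implemented in the repository: the function name `estimate_planar_yaw` is hypothetical, and the board is assumed to lie flat in a plane parallel to the xy-plane of the reference frame, so that only the rotation about the z-axis is unknown.

```python
import math
from typing import Sequence


def estimate_planar_yaw(pos_a: Sequence[float], pos_b: Sequence[float],
                        model_a: Sequence[float], model_b: Sequence[float]) -> float:
    """Yaw (radians) that rotates the board frame onto the observed layout.

    pos_a, pos_b: estimated positions of two components (only x and y are used).
    model_a, model_b: the same components' known (x, y) in the board frame.
    Assumes the board is parallel to the xy-plane of the reference frame.
    """
    dx_obs, dy_obs = pos_b[0] - pos_a[0], pos_b[1] - pos_a[1]
    dx_ref, dy_ref = model_b[0] - model_a[0], model_b[1] - model_a[1]
    if math.hypot(dx_obs, dy_obs) == 0.0 or math.hypot(dx_ref, dy_ref) == 0.0:
        raise ValueError("component positions must be distinct in the xy-plane")
    # Angle of the connecting vector as observed vs. as defined by the board model.
    yaw = math.atan2(dy_obs, dx_obs) - math.atan2(dy_ref, dx_ref)
    # Wrap the difference into the range [-pi, pi].
    return math.atan2(math.sin(yaw), math.cos(yaw))


# Example with made-up values: the board model places component B along +x of A,
# while the observation places it along +y, i.e. a quarter turn.
yaw = estimate_planar_yaw((0.5, 0.2, 0.4), (0.5, 0.3, 0.4), (0.0, 0.0), (0.1, 0.0))
```

A heuristic like this depends directly on the accuracy of the two position estimates, so components that are far apart on the board give a more stable angle than neighbouring ones.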

Software Framework

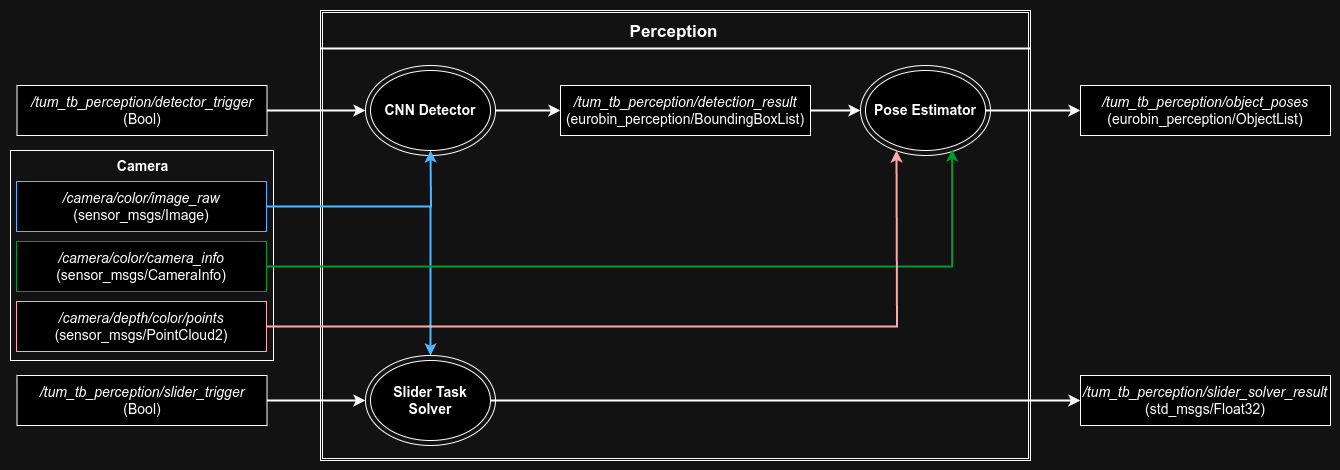

The pose estimator is implemented in Python within the ROS framework as a set of modular, extensible components, which are depicted above. It is available for both ROS 1 (Noetic) and ROS 2 (Humble).

Talk at the European Robotics Forum 2025

This work was published in the Springer Proceedings in Advanced Robotics (SPAR, volume 36) and presented at the European Robotics Forum. I had the pleasure of being invited to give a talk and was nominated for a best paper award within the area of “AI for robotics”.

Conclusions and Future Work

This project produced a reusable robot perception skill in the spirit of the euROBIN project’s objective of collaboratively developing transferable robot software. Using the basic principles of transfer learning and direct depth data processing, this solution enables data-efficient object recognition and 3D position estimation, both of which can be readily adapted to other objects for similar manipulation tasks. The software package was designed to be easy to integrate into other perception stacks and to adapt and extend where necessary. Its utility was demonstrated during euROBIN competitions, in which multiple teams used its functionality within their own frameworks.

In the future, I hope to develop this further into a general-purpose robot vision skill, starting with a more general, task-agnostic strategy for orientation estimation.